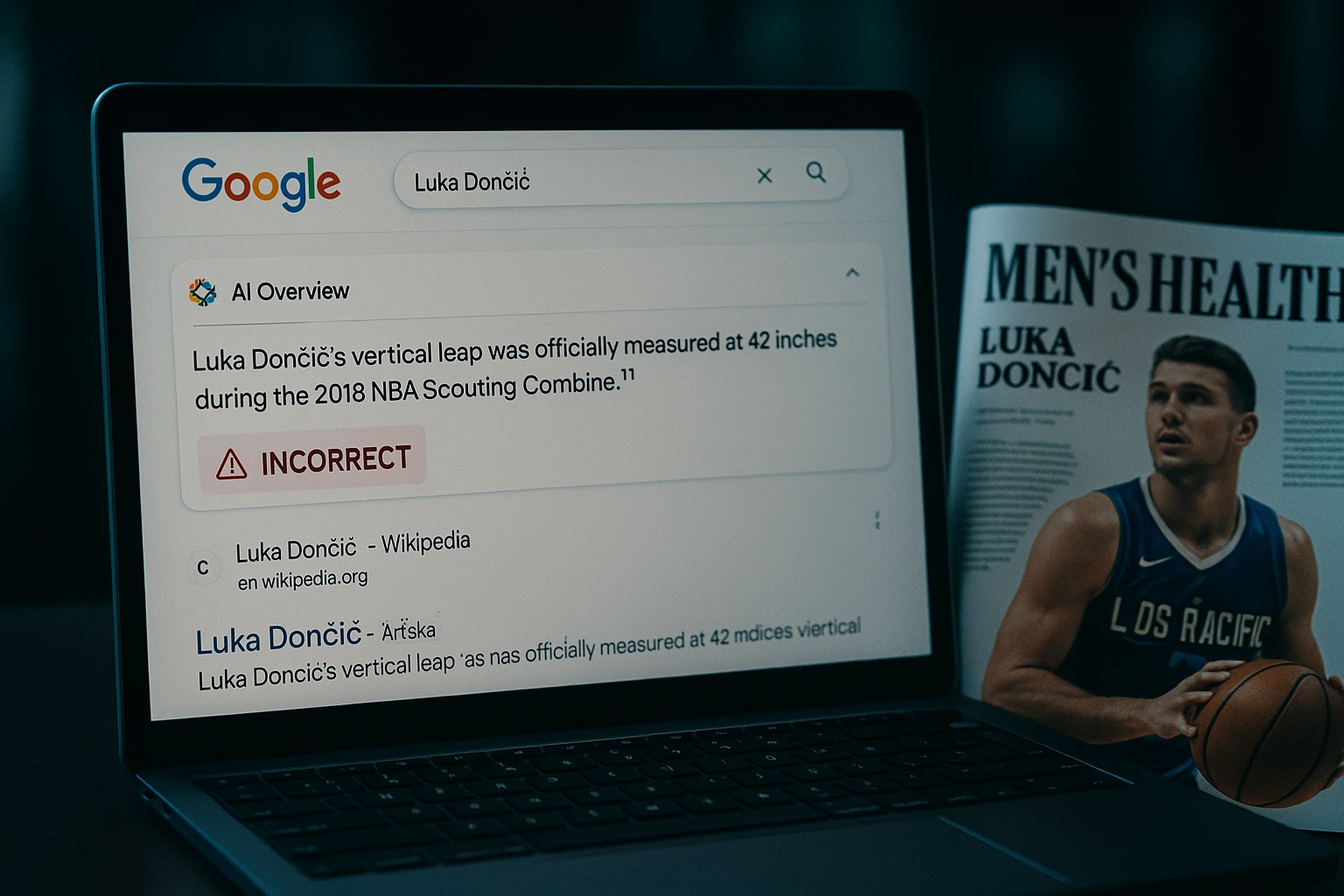

Google AI misinformation has found its way into mainstream media. Men’s Health magazine recently published an article that falsely claimed NBA star Luka Dončić recorded a 42-inch vertical leap at the 2018 NBA Scouting Combine. The problem? He didn’t even attend the event.

The false claim likely originated in Google’s AI-generated summary, which incorrectly attributed the stat to Dončić. In reality, the 42-inch vertical belongs to Donte DiVincenzo, now a guard for the Minnesota Timberwolves, who did hit that number at the Combine.

AI summary triggered the misinformation

Nick Angstadt, host of the Locked on Mavericks podcast, first spotted the error and shared a screenshot of Google’s AI Overview summary on social media. The summary stated Dončić’s vertical was “officially measured at 42 inches,” spreading misinformation that Men’s Health repeated in its article.

Both the article and the AI summary have since been corrected. Men’s Health acknowledged the mistake and updated the piece. Google has not issued a public explanation.

The risks of unchecked AI summaries

This incident is another cautionary tale about the dangers of relying on AI-generated summaries without human verification. What should have been a strong feature on Dončić was undercut by a simple but avoidable error.

“It’s just the new version of ‘don’t trust Wikipedia,’” Angstadt wrote, warning users to verify AI-generated claims before accepting them as fact.

A recent Pew Research Center study found that users are far less likely to click on links when AI summaries appear in Google search. Users clicked a search result link in only 8% of visits where a summary was shown, and clicked a source cited in the summary itself in just 1%.

Publishers fear traffic loss—and false claims

The low click-through rate raises red flags for digital publishers, who depend on link traffic for revenue. It also shows how easily misinformation can spread when AI tools summarize content without adequate fact-checking.

Men’s Health is owned by Hearst, which has not responded to inquiries. Meanwhile, Dončić’s actual performance continues to speak for itself—no AI summary needed.

Conclusion

The Google AI misinformation incident involving Luka Dončić shows how quickly false claims can spread when AI-generated summaries aren’t verified. As more publishers rely on AI tools, fact-checking remains essential—not optional.
